Vibe Coding: When AI Writes the Code, Who Checks the Quality?

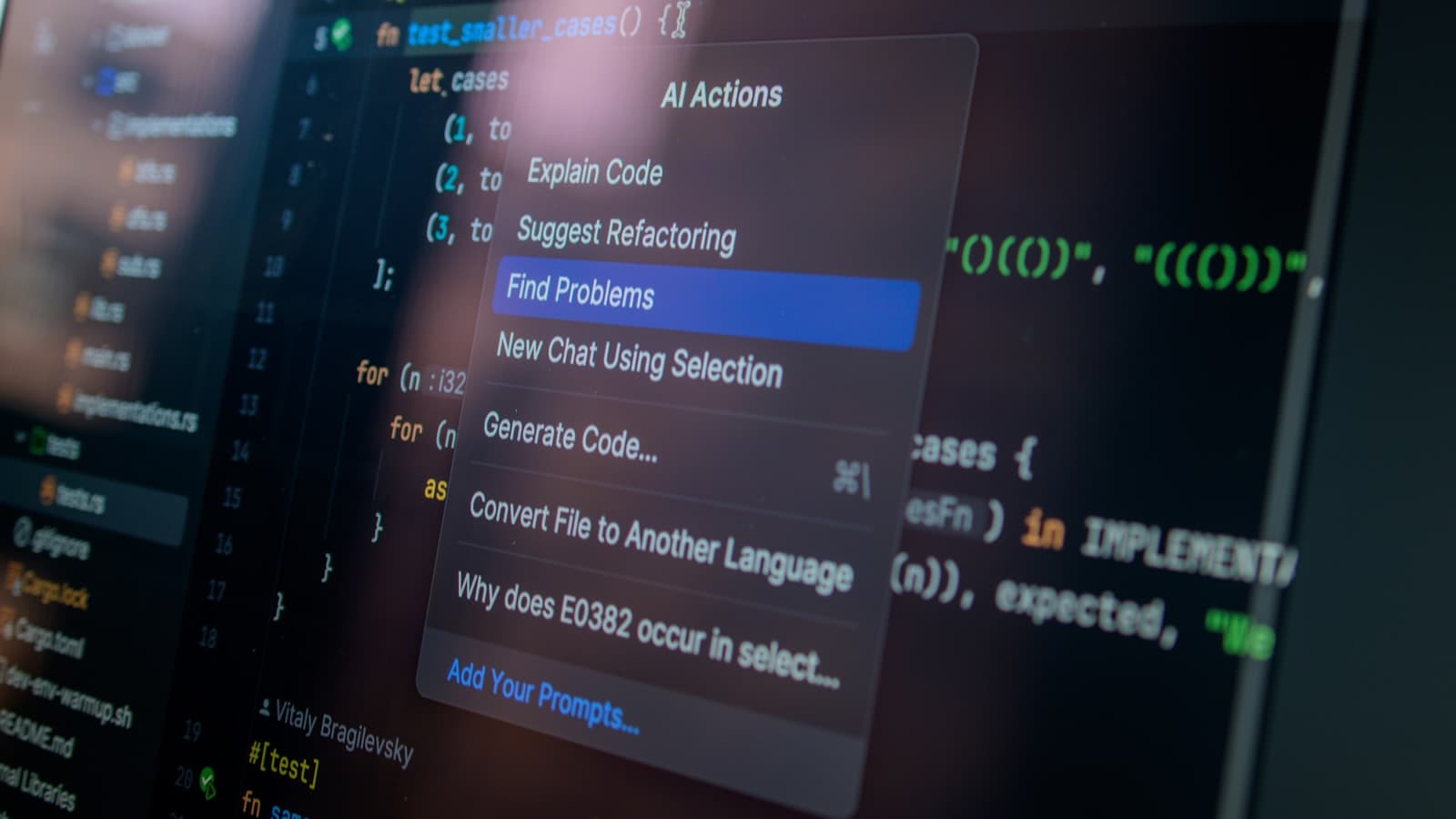

Photo by Daniil Komov on Unsplash

A startup founder proudly presents his new app. Built in three weeks, entirely with AI. It works. For now.

He didn't hire a developer, didn't commission an agency, didn't run sprint planning. Instead, he described what the app should do to an AI assistant in plain language. The code was generated, the app deployed. Investors are impressed. The first funding round is imminent.

What nobody checked: whether the app is secure. Whether it works with 1,000 users. Whether the generated code is maintainable. Whether customer data is protected in compliance with GDPR. Whether the chosen dependencies are still actively maintained.

This is not an isolated case. Since Andrej Karpathy, former head of AI at Tesla and co-founder of OpenAI, coined the term "vibe coding" in early 2025, we have witnessed a new wave in software development. Non-developers are creating functional applications in record time. The results are impressive. The risks are invisible. And the wave has reached mid-sized businesses: CEOs, project managers, and agency owners are experimenting with AI tools, or have employees who already do.

This article explains what lies behind the phenomenon, which risks decision-makers need to understand, and how you can deploy AI-generated software responsibly.

What Is Vibe Coding?

Vibe coding describes a workflow in which software is created through natural language instructions given to AI assistants. Instead of writing code, you describe in everyday language what the software should do. The AI generates the complete code; you test the result and give feedback to correct it. The process repeats until the result matches your vision.

The term was coined by Andrej Karpathy, who introduced it on X (formerly Twitter) in February 2025. His description: you surrender to the "vibe," forget that code exists, and accept the result as long as it works. Karpathy himself explicitly emphasized that this approach is meant for throwaway projects and prototypes, not for production systems. That context, however, is regularly lost in the enthusiasm.

The tools for this are now abundant: Cursor, GitHub Copilot, Claude Code, Windsurf, Bolt, Lovable, and Replit Agent, to name just a few. They all make it possible to create software through descriptions rather than programming. Some of these tools specifically target non-developers and promise that programming skills become entirely unnecessary.

An important distinction: Vibe coding is not the same as AI-assisted software development by experienced engineers. When a senior developer uses AI tools, they review every generated code block, understand the architecture, and make deliberate technical decisions. They recognize when the AI suggests an insecure solution and correct it. In vibe coding, this review is absent, either due to lack of knowledge or by conscious choice. That is precisely where the risk lies.

Why Vibe Coding Is So Tempting

The appeal of vibe coding is understandable. For CEOs, project managers, and agency owners, it addresses several pain points that have accompanied software development for years.

Speed. An MVP that previously required three to six months of development time can now be built in days to weeks. Time-to-market shrinks dramatically, and in competitive markets, that can be decisive. Whoever reaches the market first secures attention, early customers, and feedback that guides further development.

Cost. At first glance, the most expensive element of software development disappears: the development team. No recruiting, no salaries, no onboarding time, no team conflicts, no turnover. An AI subscription costs a fraction of what a single developer earns per month. For a company that simply wants to validate an idea, it sounds like a dream.

Accessibility. Domain experts who know their business best can turn their ideas directly into software. The detour through specifications, briefings, and the inevitable gap between "what I meant" and "what was built" seems to vanish. The logistics expert builds their own planning tool. The sales director creates her own dashboard. The CEO prototypes a product idea over the weekend.

Impressive demos. Working prototypes convince stakeholders, investors, and customers. When something works on screen, it looks finished. The interface appears professional, buttons respond, data is displayed. The fact that fundamental problems may lurk beneath the surface remains invisible. This demonstration effect should not be underestimated: it changes expectations across the entire organization.

Independence. Many CEOs have had negative experiences with software projects. Delays, budget overruns, communication problems with developers, results that didn't match expectations. Vibe coding promises independence from these frustrations. You retain control, or at least believe you do.

These advantages are real. But they tell only half the story.

The Systematic Weaknesses of AI-Generated Code

AI models generate code based on statistical patterns. They were trained on millions of code repositories and produce the statistically most likely continuation of what they are given. This works surprisingly well for individual functions and isolated tasks. It fails systematically at everything that extends beyond the individual case.

Security

AI models have learned from all publicly available code, including insecure code. And there is plenty of it: if 60% of all public repositories contain a particular security vulnerability, the AI will very likely reproduce that exact insecure pattern. SQL injection, cross-site scripting, missing authentication, unencrypted data transmission, hardcoded passwords: all of these regularly appear in AI-generated code.
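To make the first item on that list concrete, here is a minimal sketch of SQL injection using Python's built-in `sqlite3` module. The table, the sample users, and the function names are assumptions made up for illustration; they do not come from any particular AI tool.

```python
import sqlite3


def build_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with two hypothetical users."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT, email TEXT)"
    )
    conn.executemany(
        "INSERT INTO users (name, email) VALUES (?, ?)",
        [("alice", "alice@example.com"), ("bob", "bob@example.com")],
    )
    return conn


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # Vulnerable: user input is pasted directly into the SQL text.
    query = f"SELECT name, email FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list:
    # Safer: the value is passed separately from the SQL text.
    return conn.execute(
        "SELECT name, email FROM users WHERE name = ?", (name,)
    ).fetchall()
```

Called with the input `x' OR '1'='1`, the unsafe version returns every row in the table, while the parameterized version returns nothing. Both functions "work" in a demo with well-behaved input, which is why the difference tends to go unnoticed.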

The problem is not that AI intentionally writes insecure code. The problem is that it has no context for security. It does not know that your application processes personal data. It does not know that your system needs to be PCI-DSS compliant. It does not know that the API is publicly accessible. It optimizes for "works," not for "is secure."

In practice, this can look as follows: the AI creates a login system that stores passwords in plaintext because that is how many tutorials demonstrate it. It generates an API without rate limits because the instruction "create an API for user data" says nothing about security. It connects a database without validating inputs because validation was not explicitly requested.
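As a rough sketch of the alternative to plaintext storage, the following uses only Python's standard library to store a salted hash instead of the password itself. The iteration count and function names are assumptions for illustration; a real system should follow current guidance or use a vetted password-hashing library.

```python
import hashlib
import hmac
import os

# Assumed work factor for illustration; tune it to current guidance and hardware.
ITERATIONS = 600_000


def hash_password(password: str) -> tuple[bytes, bytes]:
    """Return (salt, digest). Only these two values are stored, never the password."""
    salt = os.urandom(16)  # fresh random salt per password
    digest = hashlib.pbkdf2_hmac(
        "sha256", password.encode("utf-8"), salt, ITERATIONS
    )
    return salt, digest


def verify_password(password: str, salt: bytes, expected: bytes) -> bool:
    """Recompute the digest and compare it in constant time."""
    candidate = hashlib.pbkdf2_hmac(
        "sha256", password.encode("utf-8"), salt, ITERATIONS
    )
    return hmac.compare_digest(candidate, expected)
```

A reviewer does not need to read the whole codebase to check this point: a password column that contains readable text is a finding on its own.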

An experienced developer knows that security is not a feature you add on. Security is a property that must be built into the architecture from the start. AI does not have this understanding.

Architecture

AI solves individual problems excellently. It can create a login page, optimize a database query, or validate a form. What it cannot do: design a coherent overall architecture. Every instruction is processed in isolation. The result is software made up of a hundred individually correct building blocks that do not fit together.

Imagine commissioning ten different architects to each design one room of your house, without them speaking to each other. Each room might be functional. But the doors don't match the hallways, the plumbing contradicts itself, and the structural integrity was never calculated as a whole. The house looks impressive in photos. You wouldn't want to live in it.

The same happens with AI-generated code. There is no understanding of scaling, of data flows between components, or of the long-term evolution of the system. The AI does not plan a database structure that accounts for future requirements. It does not design API interfaces that are consistent and versionable. It does not think about what the system will look like in a year when ten new features have been added.

Particularly problematic: the AI makes architectural decisions implicitly, without flagging them as such. It chooses a database model, an authentication strategy, a deployment pattern, without documenting these decisions or explaining their consequences. When you later discover that the chosen architecture does not scale, there is nobody you can ask about the rationale.

Maintainability

Generated code is often redundant. The same logic is implemented in multiple places because the AI starts from scratch with each request and has no overview of the existing codebase. Naming is inconsistent: what is called "user" in one file becomes "customer" in the next and "account" in a third. Structures vary from file to file because each session follows a different approach.

There are no tests. No documentation. No clear separation of responsibilities. No conventions that future developers could follow.

For a prototype, this is acceptable. For a system that needs to be maintained and evolved over years, it is a nightmare. Every change becomes a risk because nobody can foresee the side effects. A bug fix in one place creates errors in three others because the logic was duplicated rather than centralized. New team members who take over the code spend weeks trying to understand the structure, only to discover there is none.
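The difference between duplicated and centralized logic can be shown with a deliberately small sketch. The email-cleanup rule and all names here are hypothetical; the point is only that both code paths call the same function, so a later fix happens in one place.

```python
from typing import Optional


def normalize_email(raw: str) -> str:
    """Single source of truth for how email addresses are cleaned up."""
    return raw.strip().lower()


def register_user(users: dict, email: str, name: str) -> None:
    # Registration uses the shared rule instead of its own copy.
    users[normalize_email(email)] = name


def find_user(users: dict, email: str) -> Optional[str]:
    # Lookup uses the same rule, so the two paths cannot drift apart.
    return users.get(normalize_email(email))
```

In generated code, the equivalent of `normalize_email` is often written out again in each place it is needed, with small differences between the copies. That is how a bug fix in one place ends up producing errors in others.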

Dependencies

AI assistants incorporate libraries and packages without evaluating their maintenance status, licensing model, or security history. A package that has received no update in two years and contains known security vulnerabilities is included just as readily as one that is actively maintained.

License conflicts that could land your company in legal trouble go undetected. A library with a GPL license in your proprietary product may mean you are required to disclose your entire source code. The AI does not know this and does not check.

On top of that, there is the sheer volume of dependencies. AI-generated code tends to pull in an external library for every minor task rather than implementing simple functions itself. The result is a dependency tree with hundreds of packages, each representing a potential security risk and maintenance burden.
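A first-pass dependency screen can be sketched in a few lines. Everything in this example is an assumption for illustration: the list of licenses that trigger a legal review, the two-year threshold, and the `Dependency` record, whose data would in practice come from a package registry or a software bill of materials.

```python
from dataclasses import dataclass
from datetime import date

# Policy assumptions for illustration only; legal counsel decides the real list.
REVIEW_LICENSES = {"GPL-3.0", "AGPL-3.0"}
MAX_AGE_DAYS = 730  # roughly the "two years" mentioned above


@dataclass
class Dependency:
    name: str
    license: str
    last_release: date


def screen(deps: list, today: date) -> dict:
    """Map each flagged dependency name to the list of reasons it was flagged."""
    findings = {}
    for dep in deps:
        issues = []
        if dep.license in REVIEW_LICENSES:
            issues.append("license needs legal review")
        if (today - dep.last_release).days > MAX_AGE_DAYS:
            issues.append("no release in over two years")
        if issues:
            findings[dep.name] = issues
    return findings
```

A check like this does not replace a proper audit, and it says nothing about known vulnerabilities. It only makes visible what the AI assistant never looked at.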

Hidden Costs

Perhaps the most insidious weakness: feature velocity, the speed at which new features can be added, drops dramatically after three to six months. The first weeks feel like magic. New features emerge in minutes. The dashboard looks impressive. Stakeholders are delighted.

Then the struggle begins. Every new feature breaks existing ones. Bug fixes create new bugs. The AI no longer understands its own code because it has grown inconsistently across many sessions. It suggests solutions that contradict other parts of the system. Prompts grow longer and more complex because you must provide the AI with increasing context that it forgets in the next session.

What started as a cost-effective alternative ends up costing more than professional development would have cost from the outset, because you now pay twice: once for the AI development and once for the cleanup. There is an expression in the software industry: "Pay me now or pay me later, but later always costs more."

Five Real Risks for Businesses

The technical weaknesses translate into concrete business risks that decision-makers must understand and evaluate.

1. Security and Liability

If AI-generated code inadequately protects personal data, you are liable. GDPR makes no exception for "the AI wrote the code." Depending on the jurisdiction, managing directors can also be held personally liable for data protection violations. Fines can reach up to EUR 20 million or 4% of worldwide annual turnover, whichever is higher. Add to that reputational damage and potential compensation claims from affected customers.

Particularly critical: many security vulnerabilities in AI-generated code are not obvious. An application can "work" for months while simultaneously exposing customer data through insecure API endpoints. You only learn about it when the damage has occurred, whether through an attack, a tip from an attentive user, or the data protection authority.

2. Vendor Lock-in

Vibe coding creates a new form of dependency. The generated code is often tightly coupled to the specific AI tool used to create it. The project history, the prompts, the understanding of the application logic, all of this exists only within the context of that one tool.

If that tool changes its pricing model, ceases operations, or fundamentally alters its API, you face a problem. Without experienced developers who understand the code and can migrate it, you are stuck. And because the code is undocumented and inconsistent, migration is significantly more costly than with professionally developed software.

3. Scaling Is Impossible

An application that works for ten users collapses at a thousand if the architecture is not designed for scale. AI-generated code rarely accounts for caching strategies, database indexes, connection pooling, or asynchronous processing. When your product succeeds and user numbers rise, that is precisely the moment it fails.
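One of these omissions, the missing database index, can be made visible with a small experiment. This is a sketch using Python's built-in `sqlite3` module; the `orders` table and the index name are made up for illustration, and the exact wording of the query plan varies between SQLite versions.

```python
import sqlite3


def query_plan(conn: sqlite3.Connection, sql: str, params: tuple = ()) -> str:
    """Return SQLite's description of how it would execute the query."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return " | ".join(row[3] for row in rows)  # column 3 holds the plan text


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)"
)
conn.executemany(
    "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
    [(i % 100, i * 1.5) for i in range(1000)],
)

LOOKUP = "SELECT id, total FROM orders WHERE customer_id = ?"

# Without an index, the database has to scan the whole table.
plan_before = query_plan(conn, LOOKUP, (42,))

# With an index on the filtered column, it can jump to the matching rows.
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
plan_after = query_plan(conn, LOOKUP, (42,))

print(plan_before)
print(plan_after)
```

With a thousand rows, both variants feel instant, which is exactly why the problem stays hidden in a demo. The full table scan only becomes noticeable once the table and the number of concurrent users grow.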

This is particularly painful: the success of your product reveals the weakness of your technology, precisely when expectations are highest. Customers who were enthusiastic in the first weeks become frustrated by loading times, crashes, and outages. The reputation you built erodes faster than it was established.

4. Invisible Technical Debt

Technical debt is like financial debt: it accumulates, is initially invisible, and eventually comes due. The difference with AI-generated code: the debt starts accumulating on day one, at a pace that would be nearly impossible to reach by hand.

Professional developers incur technical debt consciously and in a documented manner, as a calculated decision ("we take this shortcut to deliver faster and clean up in sprint 5"). AI-generated code creates technical debt unintentionally and without documentation. When you discover after six months that fundamental architectural decisions were wrong, starting over is often cheaper than remediation. In other words, you pay twice.

5. A False Sense of Security

Perhaps the greatest risk: "it works" is equated with "it is production-ready." A functioning prototype on your own laptop is something fundamentally different from a system that performs reliably under load, during attacks, with unexpected input, and after updates.

This confusion leads to prototypes going into production without anyone closing the gap between demo and production. There is a rule of thumb in software development: building a prototype costs 10% of the total effort. Making it production-ready costs the remaining 90%. Vibe coding delivers the first 10% at impressive speed and creates the impression that you are almost done. In reality, the largest share of the work still lies ahead.

When Vibe Coding Works, and When It Does Not

Vibe coding is not inherently bad. It is a tool, and like any tool, it has legitimate use cases and clear boundaries. The problem arises when those boundaries are ignored.

| Use Case | Assessment | Rationale |
|---|---|---|
| Internal tools and automations | Suitable | Limited user base, controlled environment, small attack surface |
| Prototypes and proofs of concept | Suitable | Rapid idea validation, no production requirements |
| Personal projects and experiments | Suitable | No risk to third parties, learning tool |
| Data visualizations and dashboards | Conditionally suitable | Depends on data sensitivity and user base |
| Customer-facing web applications | Dangerous | Security, data protection, scaling required |
| Payment processing and financial data | Very dangerous | Regulatory requirements, high liability risks |
| Health data and sensitive information | Very dangerous | Strict compliance, maximum security requirements |
| Business-critical systems | Very dangerous | Availability, maintainability, and security are business-decisive |

The rule of thumb: the more users, the more sensitive the data, and the higher the availability requirements, the less suitable vibe coding is as a standalone development method.

A prototype that internally demonstrates how an idea could work? Perfect use case. A customer-facing application that processes payment data and underpins the business model? Then you need professional development, even if the prototype looks promising.

What Decision-Makers Should Do Now

If your organization works with AI-generated code or plans to, there are five concrete steps you should take now. None of them require banning vibe coding. It is about using it wisely.

1. Take Inventory

Determine where in your organization AI-generated code is already in use. This concerns not only official projects but also initiatives by employees who "quickly" built an internal tool. A sales representative who created a customer management tool with Bolt. A marketing manager who built a reporting dashboard with Replit Agent.

Ask proactively. In many companies, significantly more AI-generated code exists than leadership realizes. And it may be processing data that falls under GDPR.

2. Assess Risk

Evaluate each identified system: What data does it process? Who uses it? How many people are affected if it goes down? What happens if there is a data breach? What regulatory requirements apply?

Prioritize by risk, not by age or size of the system. A small tool that processes customer data is more critical than a large dashboard that only displays publicly available information.
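The prioritization can be sketched as a simple scoring function. The fields and weights below are hypothetical and chosen only to illustrate the idea that data sensitivity outweighs size; each organization would need to set its own.

```python
from dataclasses import dataclass


@dataclass
class System:
    name: str
    handles_personal_data: bool
    customer_facing: bool
    business_critical: bool
    users: int


def risk_score(system: System) -> int:
    """Hypothetical weights: sensitive data counts far more than user numbers."""
    score = 0
    if system.handles_personal_data:
        score += 5
    if system.customer_facing:
        score += 3
    if system.business_critical:
        score += 3
    if system.users > 100:
        score += 1
    return score


def prioritize(systems: list) -> list:
    """Return the systems ordered from highest to lowest risk score."""
    return sorted(systems, key=risk_score, reverse=True)
```

With these weights, a five-user tool that handles customer data scores 5, while a 500-user dashboard showing only public information scores 1, so the small tool is audited first.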

3. Commission a Code Audit

Have AI-generated code that runs in customer-facing or business-critical systems reviewed by experienced developers. A professional code audit uncovers security vulnerabilities, architectural problems, and technical debt before they become business problems. This is not a luxury investment; it is risk management.

A good code audit answers three questions: Is the code secure? Is it scalable? How much will it cost to make it production-ready? Information on evaluating and quality-assuring AI systems can serve as a useful reference.

4. Establish Technical Leadership

AI-generated code needs technical oversight. This does not necessarily mean a full development team, but at minimum one person with the experience to make architectural decisions, identify security risks, and assess code quality. Someone who asks the right questions before code goes to production.

For many mid-sized companies, an external CTO or technical advisor is the right solution: strategic leadership without the cost of a full-time position. This person can simultaneously guide vibe coding as a prototyping method and ensure the results are developed professionally.

5. Define a Process

Set clear rules: vibe coding as a prototyping tool, yes. As a production method without professional review, no. Establish a process in which AI-generated prototypes are reviewed, reworked, and secured by experienced developers before going to production.

This process does not need to be bureaucratic. It can be as simple as: "Before AI-generated code touches customer data, an experienced developer reviews it." This does not save costs compared to "no oversight at all," but it saves enormous costs compared to a data breach, a system failure, or a complete restart after six months.
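Expressed as a check that could run before a deployment, the one-sentence rule might look like the following sketch. The `Change` record and its fields are assumptions for illustration; in practice this information would come from your review or ticketing system.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Change:
    ai_generated: bool
    touches_customer_data: bool
    reviewed_by: Optional[str] = None  # name of the reviewing developer, if any


def may_deploy(change: Change) -> bool:
    """Block AI-generated changes to customer data until someone has reviewed them."""
    needs_review = change.ai_generated and change.touches_customer_data
    return (not needs_review) or bool(change.reviewed_by)
```

The rule deliberately leaves everything else alone: internal prototypes and changes that do not touch customer data pass without extra steps.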

The current AI landscape offers numerous ways to integrate AI tools meaningfully into professional development workflows without sacrificing quality assurance. The future lies not in choosing between AI and human expertise, but in the intelligent combination of both.

Conclusion

Vibe coding is a genuine revolution for prototyping and idea validation. The speed at which functional applications can be created was unthinkable two years ago. For organizations that want to quickly test whether an idea has potential, this is an enormous asset. The barrier to entry for software development has never been lower.

For production systems that must be secure, scalable, and maintainable, however, experience, judgment, and technical competence remain essential, and no AI can replace them. The AI writes the code. But whether that code still works in six months, whether it is secure, whether it scales, whether it meets regulatory requirements: only a person who knows what to look for can assess that.

The smart approach to vibe coding looks like this: AI accelerates, humans decide and review. Prototypes emerge in days. Production systems emerge from prototypes that have been reworked, secured, and placed on a solid foundation by experienced developers. This way, you get the best of both worlds: the speed of AI and the experience of people who know what "production-ready" truly means.

The question is not whether you will use AI in your software development. The question is whether you will stay in control.

You have AI-generated code in use and are unsure whether it is production-ready? Contact me for an independent code audit.